All files inside a folder and its subfolders can be indexed from Java in the following ways:

1. Using the Data Import Handler.

2. Without the Data Import Handler, i.e. by writing a recursive function in Java code.

These methods are discussed below:

1. Using the Data Import Handler and calling it from Java code:

Create an XML file, say data-config.xml, and keep it in a location that the user running Apache Solr has permission to access. If the permissions are not correct, the import will not work properly.
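Permission problems can be caught early with a small readability check. The sketch below uses only the standard java.nio.file API; the class name ConfigAccessCheck and the default path are hypothetical. The result reflects the user who runs this program, so it is only meaningful when run as the same user as Solr.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

class ConfigAccessCheck {
    // True when the path is an existing regular file that the current user can read
    static boolean isReadableFile(Path path) {
        return Files.isRegularFile(path) && Files.isReadable(path);
    }

    public static void main(String[] args) {
        // Hypothetical location; pass the real path of data-config.xml as the first argument
        Path config = Paths.get(args.length > 0 ? args[0] : "/path/to/data-config.xml");
        System.out.println(config + " readable: " + isReadableFile(config));
    }
}
```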

This file defines the data source type and specifies the base folder from which files have to be taken for indexing. The TikaEntityProcessor uses Apache Tika to process incoming documents.

fileName=".*" specifies that files of all extensions will be picked up for indexing:

data-config.xml

    <dataConfig>
      <dataSource type="BinFileDataSource" />
      <document>
        <entity name="files"
                dataSource="null"
                rootEntity="false"
                processor="FileListEntityProcessor"
                baseDir="/var/www/html/solr_docs" fileName=".*"
                recursive="true"
                onError="skip">
          <field column="fileAbsolutePath" name="id" />
          <field column="fileSize" name="size" />
          <field column="fileLastModified" name="lastModified" />
          <entity name="custom_table" dataSource="solrtest" transformer="script:addfield"
                  query="select key,value from custom_table where id=${item.id}">
          </entity>
          <entity
                  name="documentImport"
                  processor="TikaEntityProcessor"
                  url="${files.fileAbsolutePath}"
                  format="text">
            <field column="file" name="fileName"/>
            <field column="Author" name="author" meta="true"/>
            <field column="text" name="text"/>
          </entity>
        </entity>
      </document>
    </dataConfig>
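The fileName attribute is a regular expression applied to file names, so it can also narrow the selection. The sketch below uses java.util.regex to show which names a given pattern would pick up. The class FileNamePatternDemo, the sample names, and the narrower pattern are illustrative assumptions, and matching with find() is an assumption about how the processor applies the pattern.

```java
import java.util.Arrays;
import java.util.List;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

class FileNamePatternDemo {
    // Keeps the names in which the regular expression finds a match
    static List<String> select(String regex, List<String> names) {
        Pattern pattern = Pattern.compile(regex);
        return names.stream()
                .filter(name -> pattern.matcher(name).find())
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<String> names = Arrays.asList("report.pdf", "notes.txt", "README");
        System.out.println(select(".*", names));               // every name is selected
        System.out.println(select(".*\\.(pdf|docx)$", names)); // only report.pdf
    }
}
```

With a pattern such as .*\.(pdf|docx)$ in place of .* only PDF and Word documents would be handed to Tika.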

Reference data-config.xml from the solrconfig.xml file located in the Solr installation folder. The solr-dataimporthandler-x.x.x.jar and solr-dataimporthandler-extras-x.x.x.jar files (version 6.2.0 in this example) must be present in the dist folder of the solr-x.x.x installation directory (download them if they are missing) and must be referenced from solrconfig.xml, as in the first line of the configuration below. Define the request handler for data import below the other request handlers in solrconfig.xml, and give it the complete path of data-config.xml.

Solrconfig.xml


/Path-to-data-config.xml file/data-config.xml

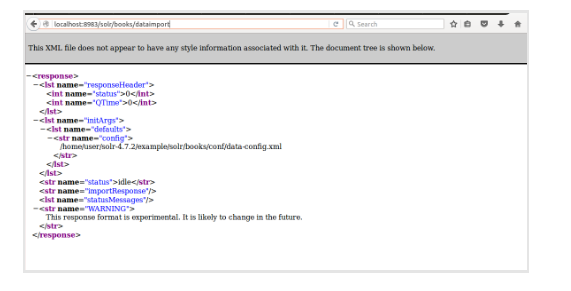
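In text form, a fragment of this kind might look like the sketch below. It is an assumption based on the description above, not a copy of the original file: the lib directory expression and the regex are illustrative and must be adapted to the installation, and the config value is the placeholder path from the text.

```xml
<!-- Assumed location of the dist folder; adjust to the actual installation -->
<lib dir="${solr.install.dir:../../../..}/dist/" regex="solr-dataimporthandler-.*\.jar" />

<requestHandler name="/dataimport" class="org.apache.solr.handler.dataimport.DataImportHandler">
  <lst name="defaults">
    <!-- Placeholder: complete path of data-config.xml -->
    <str name="config">/Path-to-data-config.xml file/data-config.xml</str>
  </lst>
</requestHandler>
```

The regex in this sketch is meant to match both the dataimporthandler jar and the extras jar. TikaEntityProcessor usually also needs the Tika libraries (shipped under contrib/extraction/lib in Solr 6.x) on the classpath.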

To check whether the data import handler is working, open the following URL in a browser:

http://localhost:8983/solr/books/dataimport

The following screen will be displayed:

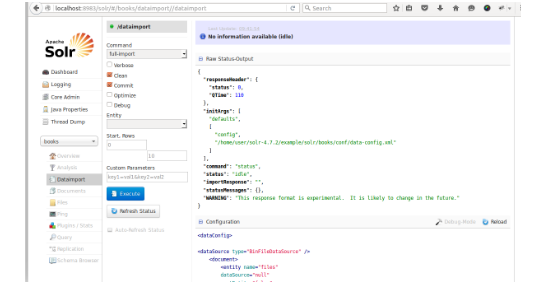
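The same check can be made from code instead of a browser. The sketch below uses only the JDK's HttpURLConnection to request the handler's status command; the class DataImportStatusCheck and its helper methods are hypothetical, and the core URL is the one used in this article. It needs a running Solr instance to return anything.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.HttpURLConnection;
import java.net.URL;
import java.nio.charset.StandardCharsets;
import java.util.stream.Collectors;

class DataImportStatusCheck {
    // Builds the handler URL for a command, e.g. .../dataimport?command=status
    static String commandUrl(String coreUrl, String command) {
        String base = coreUrl.endsWith("/") ? coreUrl.substring(0, coreUrl.length() - 1) : coreUrl;
        return base + "/dataimport?command=" + command;
    }

    // Sends a GET request and returns the response body as text
    static String get(String url) throws IOException {
        HttpURLConnection connection = (HttpURLConnection) new URL(url).openConnection();
        connection.setConnectTimeout(5000);
        connection.setReadTimeout(5000);
        try (BufferedReader reader = new BufferedReader(
                new InputStreamReader(connection.getInputStream(), StandardCharsets.UTF_8))) {
            return reader.lines().collect(Collectors.joining("\n"));
        } finally {
            connection.disconnect();
        }
    }

    public static void main(String[] args) throws IOException {
        // Core URL taken from the article; requires a running Solr instance
        System.out.println(get(commandUrl("http://localhost:8983/solr/books", "status")));
    }
}
```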

The UI for the dataimport handler section will appear as follows:

In Java, the following code can be used to call the import handler and index all files under the base folder (specified as /var/www/html/solr_docs in data-config.xml):

    import java.io.IOException;

    import org.apache.solr.client.solrj.SolrClient;
    import org.apache.solr.client.solrj.SolrServerException;
    import org.apache.solr.client.solrj.impl.HttpSolrClient;
    import org.apache.solr.client.solrj.request.QueryRequest;
    import org.apache.solr.common.params.ModifiableSolrParams;

    public class Dataimporthandler {

        public static void main(String[] args) throws SolrServerException, IOException {
            // HttpSolrClient is the HTTP client class in SolrJ 6.x; it is closed automatically here
            try (SolrClient client = new HttpSolrClient.Builder("http://localhost:8983/solr/books").build()) {
                ModifiableSolrParams params = new ModifiableSolrParams();
                params.set("command", "full-import");

                // Send the parameters to the /dataimport request handler of the core
                QueryRequest request = new QueryRequest(params);
                request.setPath("/dataimport");
                client.request(request);
            }
        }
    }
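A full-import request normally returns before indexing has finished, because the handler runs the import in the background. To wait for completion, the status command can be polled. The sketch below illustrates the idea under stated assumptions: it assumes the handler's JSON response contains a status field whose value is busy while an import is running, and the class ImportWaiter, the statusFetcher hook, and the canned responses are hypothetical.

```java
import java.util.function.Supplier;
import java.util.regex.Pattern;

class ImportWaiter {
    // Assumed shape of the status field in the handler's JSON response
    private static final Pattern BUSY = Pattern.compile("\"status\"\\s*:\\s*\"busy\"");

    static boolean isBusy(String statusResponse) {
        return BUSY.matcher(statusResponse).find();
    }

    // Polls until the response no longer reports busy or maxPolls is reached.
    // Returns true when the import was seen to finish.
    static boolean waitUntilIdle(Supplier<String> statusFetcher, int maxPolls, long pauseMillis)
            throws InterruptedException {
        for (int i = 0; i < maxPolls; i++) {
            if (!isBusy(statusFetcher.get())) {
                return true;
            }
            Thread.sleep(pauseMillis);
        }
        return false;
    }

    public static void main(String[] args) throws InterruptedException {
        // Canned responses stand in for real calls to /dataimport?command=status&wt=json
        String[] responses = {"{\"status\":\"busy\"}", "{\"status\":\"busy\"}", "{\"status\":\"idle\"}"};
        int[] next = {0};
        boolean finished = waitUntilIdle(
                () -> responses[Math.min(next[0]++, responses.length - 1)], 10, 10);
        System.out.println("import finished: " + finished);
    }
}
```

In a real program the supplier would perform an HTTP request against the status URL instead of returning canned strings.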

2. Indexing through a recursive function in Java code

Indexing can be done by traversing files and subfolders with a recursive function, e.g. showFiles() in this program:

    import java.io.File;
    import java.io.IOException;

    import org.apache.commons.io.FilenameUtils;
    import org.apache.solr.client.solrj.SolrClient;
    import org.apache.solr.client.solrj.SolrServerException;
    import org.apache.solr.client.solrj.impl.HttpSolrClient;
    import org.apache.solr.client.solrj.request.AbstractUpdateRequest;
    import org.apache.solr.client.solrj.request.ContentStreamUpdateRequest;

    public class uploadfiles {

        // Running counter used as the Solr document id
        static int count = 1;

        public static void main(String[] args) throws IOException, SolrServerException {
            File[] files = new File("/var/www/html/solr_docs/").listFiles();
            showFiles(files);
        }

        // Recursively walks the entries: descends into directories and indexes every file
        public static void showFiles(File[] files) throws IOException, SolrServerException {
            if (files == null) {
                return; // listFiles() returns null for a missing or unreadable folder
            }
            for (File file : files) {
                if (file.isDirectory()) {
                    System.out.println("*********Directory: ********************" + file.getName());
                    showFiles(file.listFiles());
                    System.out.println("****************************************");
                } else {
                    String fileName = file.getName();
                    String filePath = file.getAbsolutePath();
                    String fileExt = FilenameUtils.getExtension(fileName);
                    // calling indexing function here
                    indexFilesSolrCell(fileName, filePath, fileExt);
                }
            }
        }

        // Sends one file to the extracting request handler (Solr Cell) and commits
        public static void indexFilesSolrCell(String fileName, String path, String extension)
                throws IOException, SolrServerException {
            String urlString = "http://localhost:8983/solr/books";
            // HttpSolrClient is the HTTP client class in SolrJ 6.x; it is closed automatically here
            try (SolrClient solr = new HttpSolrClient.Builder(urlString).build()) {
                ContentStreamUpdateRequest up = new ContentStreamUpdateRequest("/update/extract");
                // addFile's second argument is the content type; this program passes the file extension
                up.addFile(new File(path), extension);
                up.setParam("literal.id", String.valueOf(count)); // setParam expects String values
                up.setParam("literal.filename", fileName);
                up.setParam("uprefix", "attr_");
                up.setParam("fmap.content", "attr_content");
                up.setAction(AbstractUpdateRequest.ACTION.COMMIT, true, true);
                solr.request(up);
                count++;
            }
        }
    }
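The same traversal can also be written without explicit recursion. The sketch below is an alternative, not the approach of the program above: it uses java.nio.file.Files.walk from the JDK to collect every regular file under a folder. The class FileCollector and the extensionOf helper are hypothetical; the helper roughly mimics what FilenameUtils.getExtension is used for above, so the example needs no extra library.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

class FileCollector {
    // Returns all regular files below root, including those in nested subfolders
    static List<Path> collectFiles(Path root) throws IOException {
        try (Stream<Path> paths = Files.walk(root)) {
            return paths.filter(Files::isRegularFile).sorted().collect(Collectors.toList());
        }
    }

    // Text after the last dot of the file name, or "" when there is none
    static String extensionOf(Path file) {
        String name = file.getFileName().toString();
        int dot = name.lastIndexOf('.');
        return dot < 0 ? "" : name.substring(dot + 1);
    }

    public static void main(String[] args) throws IOException {
        // Base folder from the article; pass another folder as the first argument
        Path root = Paths.get(args.length > 0 ? args[0] : "/var/www/html/solr_docs");
        for (Path file : collectFiles(root)) {
            // Each file would be handed to the indexing function at this point
            System.out.println(file.toAbsolutePath() + " [" + extensionOf(file) + "]");
        }
    }
}
```

Using the absolute path as the document id, as the Data Import Handler configuration does with fileAbsolutePath, would keep ids stable across runs, whereas a counter restarts at 1 each time the program is launched.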